Nick Bostrom asks, Will we engineer our own extinction? Will artificial intelligence bring us utopia or destruction?

After reading what Mark Zuckerberg, Elon Musk (who has pledged ten million dollars in grants for academics investigating A.I. safety), Stephen Hawking, Bill Gates and others have said about A.I., I was pleased to find a feature article with background information beyond all the fuss.

The article clarified what Nick Bostrom actually said and laid out his outlook for the far future of humanity.

At the end of the day, the history of A.I. shows that the fundamental issue remains: no one fully understands what intelligence is, let alone how it might evolve in a machine.

Even his opponents, such as the researcher who used the term “Frankenstein complex” to dismiss the “dystopian vision of A.I.,” concede that the question is one of timing and rate of progress.

Bostrom frames his message this way:

“A lot more is said about the risks than the upsides, but that is not necessarily because the upside is not there. There is just more to be said about the risk—and maybe more use in describing the pitfalls, so we know how to steer around them—than spending time now figuring out the details of how we are going to furnish the great palace a thousand years from now.”

My key takeaways from the feature article:

I. OMENS

It all started with the Times best seller “Superintelligence: Paths, Dangers, Strategies,” by the Oxford philosopher and transhumanist Nick Bostrom.

Bostrom argues that

true artificial intelligence might pose a danger that exceeds every previous threat from technology, even nuclear weapons, and that, if it is not managed carefully, humanity risks engineering its own extinction. Central to his concern is the prospect of an A.I. gaining the ability to improve itself, exceeding the intellectual potential of the human brain by many orders of magnitude, and becoming a new kind of life.

Distinguished physicists such as Stephen Hawking have echoed its warning. Within the high caste of Silicon Valley, Bostrom has acquired the status of a sage. Elon Musk, the C.E.O. of Tesla, promoted the book on Twitter, noting,

“We need to be super careful with AI. Potentially more dangerous than nukes.”

Bill Gates recommended it, too.

Bostrom tends to see the mind as immaculate code, the body as inefficient hardware—able to accommodate limited hacks but probably destined for replacement.

Far future: what might humanity look like millions of years from now? The upper limit for survival on Earth is set by the life span of the sun, about five billion years. It is possible that Earth’s orbit will adjust, but more likely that the planet will be destroyed. In any case, long before then, nearly all plant life will die, the oceans will boil, and the Earth’s crust will heat to a thousand degrees. In half a billion years, the planet will be uninhabitable.

From Bostrom’s office, the view of the future divides into three grand panoramas:

- In one, humanity experiences an evolutionary leap, either assisted by technology or by merging into it and becoming software, to achieve a sublime condition that Bostrom calls “posthumanity.” Death is overcome, mental experience expands beyond recognition, and our descendants colonize the universe.

- In another panorama, humanity becomes extinct or experiences a disaster so great that it is unable to recover.

- Between these extremes, Bostrom envisions scenarios that resemble the status quo—people living as they do now, forever mired in the “human era.” It’s a vision familiar to fans of sci-fi: on “Star Trek,” Captain Kirk was born in the year 2233, but when an alien portal hurls him through time and space to Depression-era Manhattan he blends in easily.

Bostrom suggests that “the very long-term future of humanity may be relatively easy to predict.”

He offers an example:

If history were reset, the industrial revolution might occur at a different time, or in a different place, or perhaps not at all, with innovation instead occurring in increments over hundreds of years. In the short term, predicting technological achievements in the counter-history might not be possible; but after, say, a hundred thousand years it is easier to imagine that all the same inventions would have emerged.

The farther into the future one looks, the less likely it seems that life will continue as it is.

He favors the far ends of possibility: humanity becomes transcendent or it perishes.

Homo sapiens, since its emergence two hundred thousand years ago, has proved remarkably resilient, and it is not obvious what might imperil its existence. Climate change is likely to cause vast environmental and economic damage, but it does not seem impossible to survive. So-called super-volcanoes have thus far not threatened the perpetuation of the species. NASA spends forty million dollars each year to determine whether there are significant comets or asteroids headed for Earth. (There aren’t.) Bostrom frames the question in terms of the “Great Filter,” the hypothetical barrier that would explain why we see no sign of other advanced civilizations in the universe.

“Natural disasters such as asteroid hits and super-volcanic eruptions are unlikely Great Filter candidates, because, even if they destroyed a significant number of civilizations, we would expect some civilizations to get lucky and escape disaster,” he argues. “Perhaps the most likely type of existential risks that could constitute a Great Filter are those that arise from technological discovery. It is not far-fetched to suppose that there might be some possible technology which is such that

(a) virtually all sufficiently advanced civilizations eventually discover it and

(b) its discovery leads almost universally to existential disaster.”

II. THE MACHINES

The field of A.I. was born in 1955, when three mathematicians and an I.B.M. programmer drew up a proposal for a project at Dartmouth, stating:

“An attempt will be made to find how to make machines use language, form abstractions and concepts, solve kinds of problems now reserved for humans, and improve themselves.”

They recognized that success required answers to fundamental questions: What is intelligence? What is the mind?

In 1951, Alan Turing argued that at some point computers would probably exceed the intellectual capacity of their inventors, and that

“therefore we should have to expect the machines to take control.”

Whether this would be good or bad he did not say.

I. J. Good, a statistician who had worked with Turing, wrote:

“An ultraintelligent machine could design even better machines. There would then unquestionably be an ‘intelligence explosion,’ and the intelligence of man would be left far behind. Thus the first ultraintelligent machine is the last invention that man need ever make, provided that the machine is docile enough to tell us how to keep it under control. It is curious that this point is made so seldom outside of science fiction. It is sometimes worthwhile to take science fiction seriously.”

When its early promises went unmet, the field of A.I. retreated from its founding goals and, unexpectedly, created space for outsiders to imagine more freely what the technology might look like:

- The scientists, steeped in technical detail, were preoccupied with making devices that worked;

- the transhumanists, motivated by the hope of a utopian future, were asking, What would the ultimate impact of those devices be?

The two communities could not have been more different.

No one fully understands what intelligence is, let alone how it might evolve in a machine. Can it grow as Good imagined, gaining I.Q. points like a rocketing stock price? If so, what would its upper limit be? And would its increase be merely a function of optimized software design, without the difficult process of acquiring knowledge through experience? Can software fundamentally rewrite itself without risking crippling breakdowns? No one knows. In the history of computer science, no programmer has created code that can substantially improve itself.

To a large degree, Bostrom’s concerns turn on a simple question of timing: Can breakthroughs be predicted?

The researcher Oren Etzioni, who used the term “Frankenstein complex” to dismiss the “dystopian vision of A.I.,” concedes Bostrom’s overarching point: that the field must one day confront profound philosophical questions.

“Nobody responsible would say you will see anything remotely like A.I. in the next five to ten years. And I think most computer scientists would say, ‘In a million years—we don’t see why it shouldn’t happen.’ So now the question is: What is the rate of progress? There are a lot of people who will ask: Is it possible we are wrong? Yes. I am not going to rule it out. I am going to say, ‘I am a scientist. Show me the evidence.’ ”

III. MISSION CONTROL

On the question of timing, deep learning offers an example of breathtaking advances made without any profound theoretical breakthrough.

Deep learning is a technique that uses many-layered neural networks to discern complex patterns in huge quantities of data. For decades, researchers, hampered by the limits of their hardware, struggled to get the technique to work well. But, beginning in 2010, the increasing availability of Big Data and cheap, powerful video-game processors had a dramatic effect on performance.

Stuart Russell, the co-author of the textbook “Artificial Intelligence: A Modern Approach” and one of Bostrom’s most vocal supporters in A.I., said:

“I have been talking to quite a few contemporaries,” he said. “Pretty much everyone sees examples of progress they just didn’t expect.”

He cited a YouTube clip of a four-legged robot: one of its designers tries to kick it over, but it quickly regains its balance, scrambling with uncanny naturalness.

“A problem that had been viewed as very difficult, where progress was slow and incremental, was all of a sudden done. Locomotion: done.”

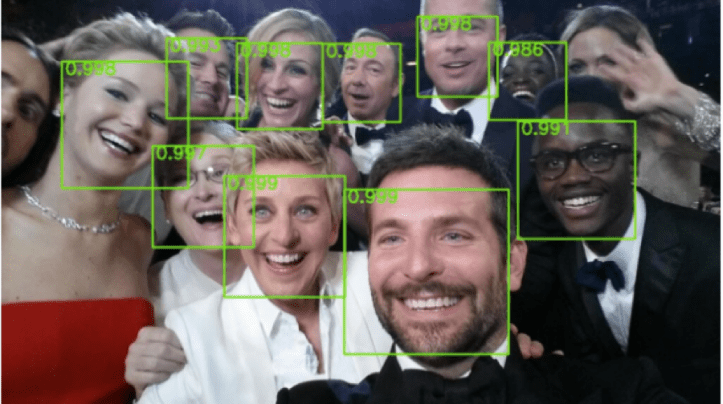

The approach is now ascendant in an array of fields: speech processing, face recognition, language translation. Researchers working on computer vision had spent years trying to get systems to identify objects; in almost no time, deep-learning networks crushed their records. In one common test, using a database called ImageNet, humans identify photographs with a five-per-cent error rate; Google’s network operates at 4.8 per cent. A.I. systems can differentiate a Pembroke Welsh Corgi from a Cardigan Welsh Corgi.

In October 2014, Tomaso Poggio, an M.I.T. researcher, gave a skeptical interview.

“The ability to describe the content of an image would be one of the most intellectually challenging things of all for a machine to do,” he said. “We will need another cycle of basic research to solve this kind of question.”

He predicted that the cycle would take at least twenty years. A month later, Google announced that its deep-learning network could analyze an image and offer a caption of what it saw:

“Two pizzas sitting on top of a stove top,” or

“People shopping at an outdoor market.”

When Poggio was asked about the results, he dismissed them as automatic associations between objects and language; the system did not understand what it saw.

“Maybe human intelligence is the same thing, in which case I am wrong, or not, in which case I was right,” he told The New Yorker. “How do you decide?”

Early in his career, Bostrom predicted that cascading economic demand for A.I. would build up across the fields of medicine, entertainment, finance, and defense. As the technology became useful, that demand would only grow.

“If you make a one-per-cent improvement to something—say, an algorithm that recommends books on Amazon—there is a lot of value there,” Bostrom said. “Once every improvement potentially has enormous economic benefit, that promotes effort to make more improvements.”

After decades of pursuing narrow forms of A.I., researchers are seeking to integrate them into systems that resemble a general intellect. Since I.B.M.’s Watson (reintroduced by Carrie Fisher and Ridley Scott in I.B.M.’s Oscars ads) won “Jeopardy!,” the company has committed more than a billion dollars to develop it, and is reorienting its business around “cognitive systems.” One senior I.B.M. executive declared,

“The separation between human and machine is going to blur in a very fundamental way.”

Early on, Google’s founders, Larry Page and Sergey Brin, understood that the company’s mission required solving fundamental A.I. problems. Page has said that he believes the ideal system would understand questions, even anticipate them, and produce responses in conversational language. Google scientists often invoke the computer in “Star Trek” as a model.

In recent years, Google has purchased seven robotics companies and several firms specializing in machine intelligence; it may now employ the world’s largest contingent of Ph.D.s in deep learning. Perhaps the most interesting acquisition is a British company called DeepMind, started in 2011 to build a general artificial intelligence. In 2013, DeepMind published the results of a test in which its system played seven classic Atari games, with no instruction other than to improve its score. Earlier systems had been far narrower: I.B.M.’s chess program had defeated Garry Kasparov, but it could not beat a three-year-old at tic-tac-toe. In six of the seven games, DeepMind’s system outperformed all previous algorithms; in three it was superhuman. In a boxing game, it learned to pin down its opponent and subdue him with a barrage of punches. DeepMind’s chief founder, Demis Hassabis, described his company as an “Apollo Program” with a two-part mission:

“Step one, solve intelligence. Step two, use it to solve everything else.”

The company’s patent lists a range of uses, from finance to robotics, but Hassabis is clear about the challenges. DeepMind’s system still fails hopelessly at tasks that require long-range planning, knowledge about the world, or the ability to defer rewards, things that a five-year-old child might be expected to handle. The company is working to give the algorithm conceptual understanding and the capability of transfer learning, the ability humans have to apply lessons from one situation to another. These are not easy problems. But DeepMind has more than a hundred Ph.D.s to work on them, and the rewards could be immense. Hassabis spoke of building artificial scientists to resolve climate change, disease, and poverty.

“Even with the smartest set of humans on the planet working on these problems, these systems might be so complex that it is difficult for individual humans, scientific experts,” he said. “If we can crack what intelligence is, then we can use it to help us solve all these other problems.”

He, too, believes that A.I. is a gateway to expanded human potential.

Geoffrey Hinton, a Google researcher who for decades has been a central figure in developing deep learning, discussed with Bostrom the prospect of political systems using A.I. to terrorize people. Why, then, do the research at all?

“I could give you the usual arguments,” Hinton said. “But the truth is that the prospect of discovery is too sweet.”

He smiled awkwardly, the word hanging in the air—an echo of Oppenheimer, who famously said of the bomb,

“When you see something that is technically sweet, you go ahead and do it, and you argue about what to do about it only after you have had your technical success.”

http://www.newyorker.com/magazine/2015/11/23/doomsday-invention-artificial-intelligence-nick-bostrom

Since then, I’ve watched Nick Bostrom’s 2016 TED talk, in which he comes across as far less awkward than in The New Yorker’s description: