What I discovered or finally understood regarding AI in 2019:

AI bar – yay, not only does the AI bar accurately identify the order in which people arrived at the bar to place their order (which is fair); by doing so, it also removes the human bias of bartenders, who tend to pay attention to good people 😉

AI hiring – AI is everywhere, including in hiring, which means potential unconscious bias (see my previous post on this). WSJ shared some top tips to get past the robots.

And can you make AI fairer than a judge? Play the MIT courtroom algorithm game:

“Predictions reflect the data used to make them—whether by algorithm or not. If black defendants are arrested at a higher rate than white defendants in the real world, they will have a higher rate of predicted arrest as well. This means they will also have higher risk scores on average, and a larger percentage of them will be labeled high-risk—both correctly and incorrectly.” […]

This strange conflict of fairness definitions isn’t just limited to risk assessment algorithms in the criminal legal system. The same sorts of paradoxes hold true for credit scoring, insurance, and hiring algorithms. […]

There is no algorithm that can fix this; this isn’t even an algorithmic problem, really. Human judges are currently making the same sorts of forced trade-offs—and have done so throughout history. But here’s what an algorithm has changed. Though judges may not always be transparent about how they choose between different notions of fairness, people can contest their decisions. In contrast, COMPAS, which is made by the private company Northpointe, is a trade secret that cannot be publicly reviewed or interrogated. […] There is no more public accountability.
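To see why the fairness definitions in the quote collide, here is a minimal sketch with made-up numbers. It is not COMPAS or the MIT game's data: the two groups, the scores and the head counts are all hypothetical, and the score is assumed to be perfectly calibrated (in a bucket scored 0.8, exactly 80% are re-arrested). The same score and the same threshold are applied to both groups; only the base rates differ.

```python
from dataclasses import dataclass


@dataclass
class Bucket:
    score: float  # predicted probability of re-arrest (hypothetical)
    people: int   # number of defendants who received this score


def group_stats(buckets, threshold=0.5):
    """Summarise one group under a perfectly calibrated score.

    Assumption: in a bucket with score s, exactly s * people are re-arrested.
    """
    total = sum(b.people for b in buckets)
    rearrested = sum(b.score * b.people for b in buckets)
    flagged = [b for b in buckets if b.score >= threshold]
    high_risk = sum(b.people for b in flagged)
    # Flagged as high-risk but not re-arrested: the "incorrectly labeled" ones.
    false_positives = sum((1 - b.score) * b.people for b in flagged)
    return {
        "base_rate": rearrested / total,
        "high_risk_share": high_risk / total,
        "false_positive_rate": false_positives / (total - rearrested),
    }


# Two hypothetical groups of 100 people, scored by the same calibrated model.
group_a = [Bucket(0.8, 60), Bucket(0.2, 40)]  # higher real-world arrest rate
group_b = [Bucket(0.8, 20), Bucket(0.2, 80)]  # lower real-world arrest rate

stats_a = group_stats(group_a)
stats_b = group_stats(group_b)
for name, stats in [("A", stats_a), ("B", stats_b)]:
    print(name, {key: round(value, 3) for key, value in stats.items()})
```

With these invented numbers, a score of 0.8 means the same thing in both groups, yet group A's false positive rate comes out at roughly 27% against roughly 6% for group B. Equalising the error rates would mean using different thresholds per group, which breaks the "same score, same meaning" notion of fairness instead.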

AI to save lives – Using AI to spot anyone struggling/drowning in a swimming pool

Machine Learning 101 – Teach a machine. Looooove it, and I finally understood how ML works: https://teachablemachine.withgoogle.com/

Lobe Is A Machine Learning Platform For Complete Idiots – From Apple, Facebook, and Nest alum Mike Matas, Lobe makes it so you don’t need to be a data scientist to build incredible AI tools.

And here’s the AI for dummies playbook from Hyper Island students.

Hear the world’s first genderless AI voice speak (tackling our biases – see my previous post)

Mind reading technology possible, AI brain implants next – AI can now accurately read our thoughts and turn them into words and images

Ethics of AI – my favorite podcast, 99% Invisible, and the ELIZA effect. The story of a simple chatbot named ELIZA, created by MIT Professor Weizenbaum in the 1960s, shows that humans are more comfortable opening up in therapy with chatbots (as with Elli, the AI psychoanalyst for veterans, who are more likely to open up to a robot than to a human psychologist, as highlighted by Simon James in his SXSW 2017 talk, covered in my previous post). The podcast also mentions OpenAI not releasing their full code because GPT-2, its AI writer, was too good at writing (scroll down to see my take on The New Yorker and OpenAI experiment).

Ethics are a significant consideration in AI and a significant part of the 23 Asilomar AI Principles developed at the Asilomar Conference on Beneficial AI in 2017 in Pacific Grove, California. The conference was organized by the Future of Life Institute, a non-profit organization whose mission statement is “to catalyze and support research and initiatives for safeguarding life and developing optimistic visions of the future, including positive ways for humanity to steer its own course considering new technologies and challenges.” The organization was founded in 2014 by MIT cosmologist Max Tegmark, Skype co-founder Jaan Tallinn, physicist Anthony Aguirre, Viktoriya Krakovna and Meia Chita-Tegmark. As of this writing, 1,273 artificial intelligence and robotics researchers have signed onto the principles, along with 2,541 other endorsers from a variety of industries.

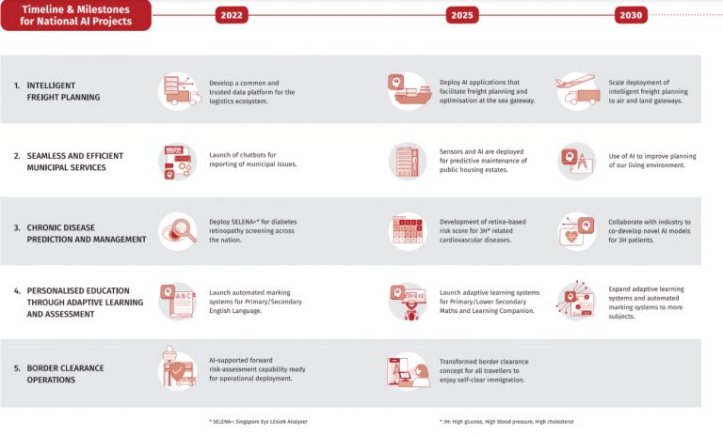

Smart Nation Singapore launched a National AI Strategy that will ‘transform’ the country by 2030. It includes 5 major plans:

- Intelligent freight planning

- Estate management services

- Chronic disease prediction & management

- Personalised education

- Border clearance operations

Machine Yearning – Holly Herndon’s search for a new art form for our tech obsessions. For Holly Herndon, a laptop is “the most intimate instrument that we’ve ever seen.” She recently completed her Ph.D. at Stanford University’s Center for Computer Research in Music and Acoustics. Her new album, “PROTO,” features an artificial neural network: she has been teaching this “AI baby,” called Spawn, to sing. Herndon’s desire to see technology as neither the best thing ever nor the worst marks her as unusual, given the divisiveness and normalized dread of our times. It feels optimistic to present A.I. as a way of making us feel more human.

The New Yorker article asks how schemes to engineer chart success, based on a knowledge of how to manipulate clunky genre categories or song length, will affect the sound of pop. It also notes that “algoraves” have emerged in Europe: dance parties with “live coding,” including displays of strings of code being edited in real time. Much of the music coming out of the algorave world resembles that of Autechre and Aphex Twin.

The New Yorker and OpenAI: The Next Word – Where will predictive text take us?

A seminal New Yorker article (and experiment with OpenAI) articulating the history of AI in simple terms, including how Gmail’s Smart Compose works and how Grammarly was founded (funnily, Grammarly flagged 109 grammatical “correctness” issues in The New Yorker’s perfect copy, which probably made the excellent editor cringe).

Can a Machine Learn to Write for The New Yorker? Extraordinary advances in machine learning in recent years have resulted in A.I.s that can write for you.

In Feb 2019, OpenAI (a non-profit founded in 2015 by Greg Brockman, ex-CTO of Stripe; Elon Musk; Sam Altman of Y Combinator; and Ilya Sutskever, ex-Google Brain; with funding from Peter Thiel and Reid Hoffman) delayed the release of the full version of GPT-2 (its AI writer) because the machine was too good at writing. Three less powerful versions were made available in Feb, May and August 2019.

The experiment: OpenAI fine-tuned GPT-2 on The New Yorker’s digital archives (all nonfiction work since 2007, with some classics going back to the 1960s): millions of polished and fact-checked words, many written by masters of the literary art. Could the machine learn to write well enough for The New Yorker? It would take a human two weeks of reading 24/7 to get through the archive; the AI processed it in under an hour.

I love the fact that

“the neural net categorised distinctive aspects of New Yorker prose – the words its writers tended to favor, the magazine’s rhythms, its distinctive style of narrative rhetoric, its voice – and the learning algorithm used these data to automatically adjust the neural net’s settings, so that its predictions leaned towards New Yorker locutions.”

If this isn’t a love letter and definition of The New Yorker’s brand, I don’t know what is.

The result: Using the 1950 Profile of Ernest Hemingway as a prompt –

Ernest Hemingway, who may well be the greatest living American novelist and short-story writer, rarely comes to New York. He spends most of his time on a farm, the Finca Vigia, nine miles outside Havana, with his wife, a domestic staff of nine, fifty-two cats, sixteen dogs, a couple of hundred pigeons, and three cows.

The AI system responded with the following generated text –

I walked up the path to the fence and saw a plump dog, that had been a common visitor to the Finca Vigia before the war, galloping up a path to the main building, with a tiny cow of the same name standing by her side. There was a puddle of red gravy in the front yard, and Hemingway sat down in a lawn chair.

Many of the Twitter comments on the experiment focused on what AI researchers call “world-modelling failures” (the tiny cows and puddle of red gravy), yet

“it sounded like a familiar, trusted voice that I was inclined to believe. In fact, it sounded sort of like my voice,” said the author, John Seabrook.

After a few hours, it hurt to see:

“an idiot savant that used math for words. It was sickening to see how the slithering machine intelligence, with its ability to take on the color of the prompt prose, slipped into some of my favorite paragraphs, impersonating their voices but without their souls”.

GPT-2 was like a three-year-old prodigiously gifted with the illusion, at least, of college-level writing ability. But even a child prodigy would have a goal in writing; the machine’s only goal is to predict the next word. It can’t sustain a thought, because it can’t think causally.
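To make "its only goal is to predict the next word" concrete, here is a toy sketch. It is emphatically not how GPT-2 works inside (GPT-2 is a large neural network; this just counts which word follows which), and the tiny corpus and the function names are made up for illustration. What it shares with GPT-2 is the objective: given the words so far, pick a likely next word.

```python
from collections import Counter, defaultdict


def train_bigrams(text):
    """Count, for every word, which words follow it in the training text."""
    words = text.lower().split()
    following = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        following[current][nxt] += 1
    return following


def predict_next(following, word):
    """Return the most frequent follower of `word`, or None if never seen."""
    candidates = following.get(word.lower())
    if not candidates:
        return None
    return candidates.most_common(1)[0][0]


def generate(following, start, length=8):
    """Keep appending the predicted next word; there is no plan beyond that."""
    output = [start]
    for _ in range(length):
        nxt = predict_next(following, output[-1])
        if nxt is None:
            break
        output.append(nxt)
    return " ".join(output)


# Hypothetical mini "archive" standing in for the training text.
corpus = "the dog saw the cow and the dog sat down and the dog ran"
model = train_bigrams(corpus)

print(predict_next(model, "the"))
print(generate(model, "the", length=6))
```

The model picks "dog" after "the" simply because that pairing is the most frequent in the corpus. It has no idea what a dog or a cow is, which is a miniature version of the "tiny cow" problem above.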